LLM Resource Management and Governance

Druid provides a centralized, LLM-agnostic framework for managing connections to Large Language Models. This architecture decouples AI Agent logic from specific model providers, allowing you to configure, govern, and switch between models without modifying conversational flows.

By centralizing these resources, Druid ensures that all generative AI activity adheres to enterprise safety standards and is subject to the same Role-Based Access Control (RBAC) policies as the rest of the platform.

LLM-Agnostic Orchestration

Druid allows you to unify multiple model providers into a single execution layer. You can configure AI Agents to utilize the following models based on your specific performance and residency requirements:

- Public Cloud Providers: Azure OpenAI, Anthropic Claude, Google Gemini, and Mistral.

- Open Source Models: LLaMA and other compatible frameworks.

- Private LLM: Druid’s proprietary Becus model or GPT OSS, which can be deployed fully on-premises for maximum data residency.
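The decoupling described above can be pictured with a small sketch. It is not Druid's implementation: the `LLMClient` protocol, the two stand-in client classes, and the `RESOURCES` registry are hypothetical names, used only to show how an agent that refers to a resource by name is unaffected when the provider behind that name changes.

```python
from typing import Protocol


class LLMClient(Protocol):
    """Hypothetical interface that every provider adapter would satisfy."""

    def complete(self, prompt: str) -> str: ...


class CloudClient:
    """Stand-in for a public cloud provider adapter (makes no real API call)."""

    def complete(self, prompt: str) -> str:
        return f"[cloud] {prompt}"


class OnPremClient:
    """Stand-in for a privately hosted model adapter (makes no real API call)."""

    def complete(self, prompt: str) -> str:
        return f"[on-prem] {prompt}"


# Central registry: agents know only the resource name, never the provider.
RESOURCES: dict[str, LLMClient] = {"default-llm": CloudClient()}


def agent_reply(user_message: str, resource_name: str = "default-llm") -> str:
    """Agent logic: look up the governed resource and delegate to it."""
    return RESOURCES[resource_name].complete(user_message)


before = agent_reply("hello")
# An administrator swaps the provider; agent_reply itself is unchanged.
RESOURCES["default-llm"] = OnPremClient()
after = agent_reply("hello")
```

The agent function is identical before and after the swap; only the registry entry changes, which is the property the centralized resource configuration is meant to provide.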

Centralized Governance

Configuring LLM resources centrally provides several governance advantages:

- Credential Security: Define API endpoints and keys once in a secure administrative environment rather than hardcoding them into individual AI Agents.

- Access Control: Use RBAC to restrict who can view, edit, or rotate LLM credentials.

- Operational Consistency: Ensure that AI Agent features, such as LLM NLU, Machine Translation, and Response Streaming, all use authorized and governed model versions.

This unified approach simplifies configuration and ensures consistent access to your language models throughout your AI Agent implementation.

Different providers support different configuration options. For example, Azure OpenAI supports two authentication methods:

- API key

- OAuth (Microsoft Entra ID)

Other providers support only API keys.

Configuring LLM Resources

Centralizing your LLM resources ensures that credentials remain secure and that model updates can be managed globally.

Prerequisites for using OAuth for Azure OpenAI resources

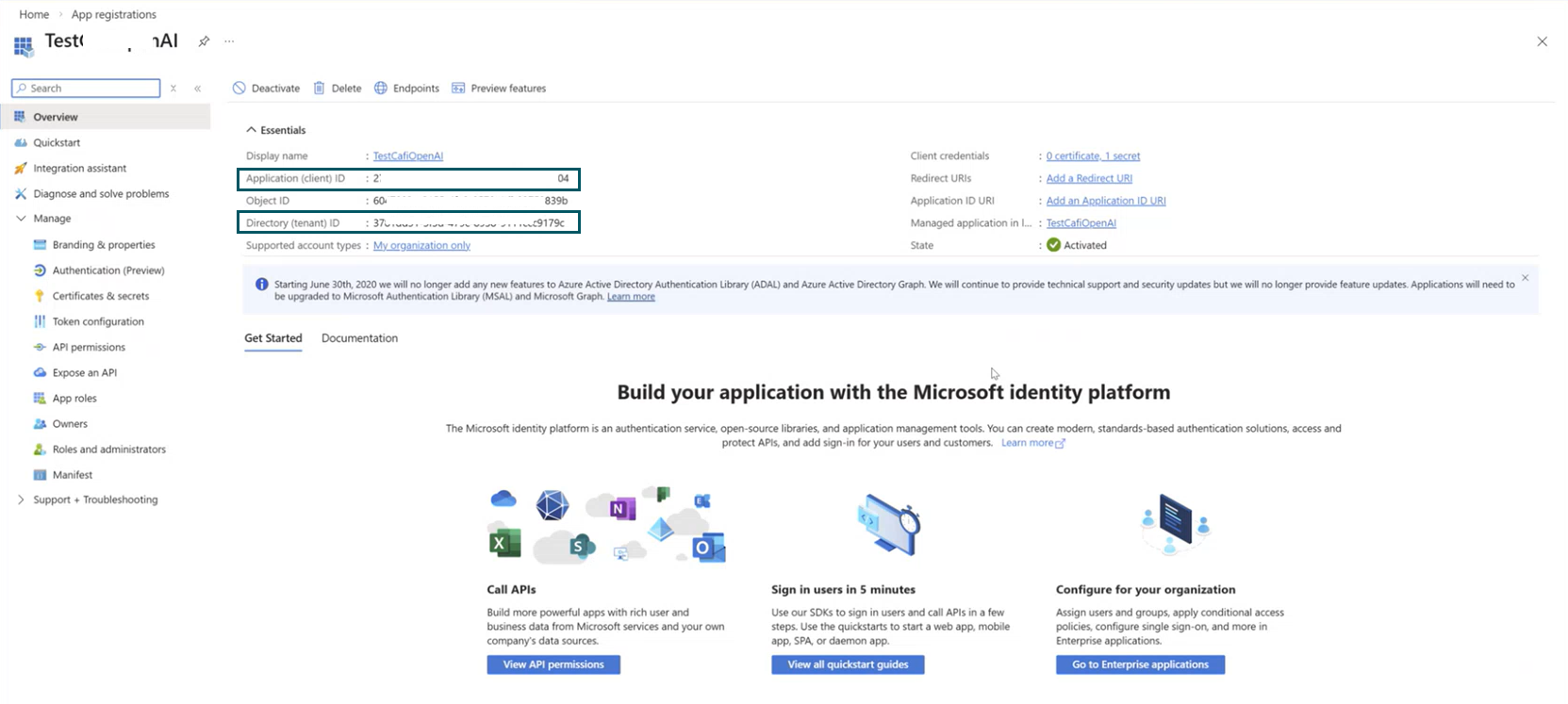

- Register an application in Microsoft Entra ID following the Microsoft instructions.

- After you register the application, note the following values:

  - Application (client) ID

  - Directory (tenant) ID

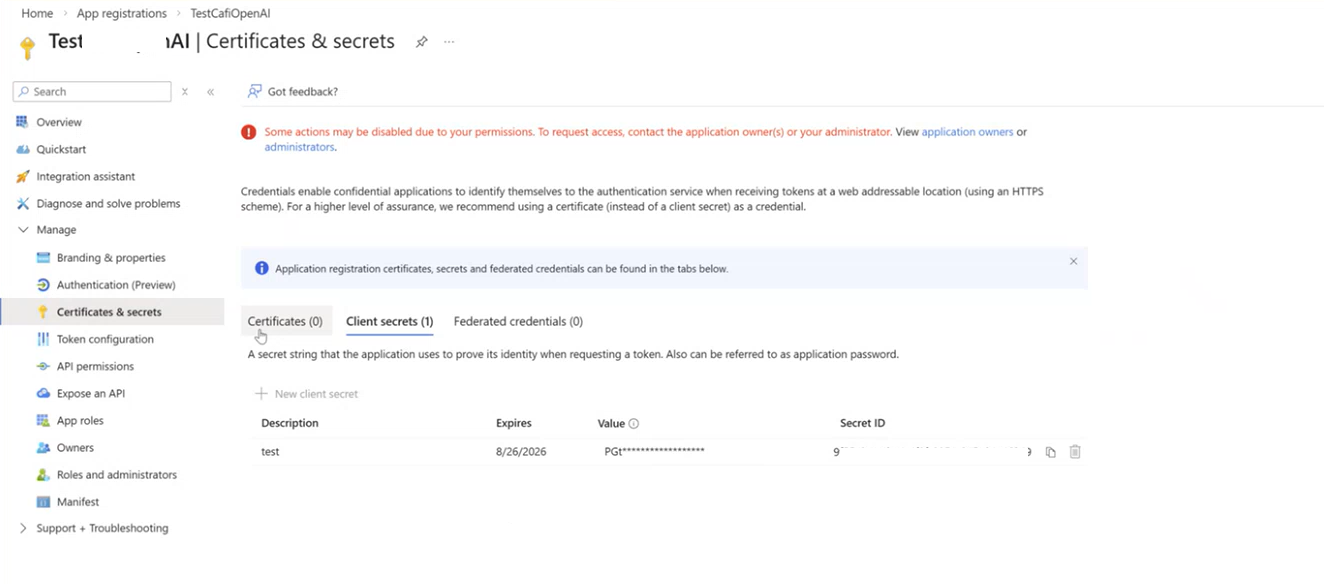

- Create a client secret for your app (Manage > Certificates & Secrets). When the new client secret is generated, copy its value immediately; you will need it in Druid.

- Grant the application access to Azure OpenAI:

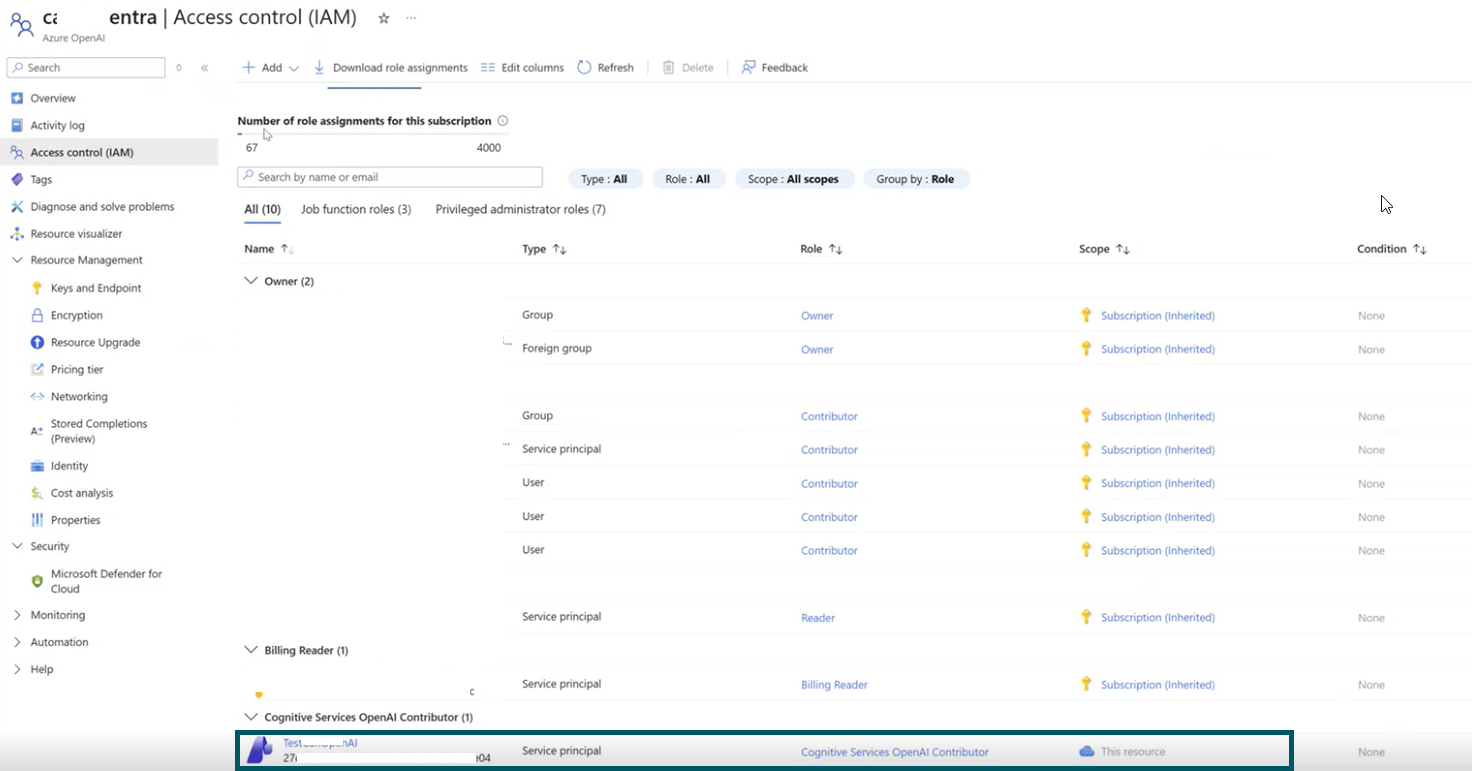

  - Open your Azure OpenAI resource in the Azure portal.

  - Select Access control (IAM).

  - Select Add role assignment.

  - Assign the role Cognitive Services OpenAI Contributor.

  - Select Members.

  - Choose Service principal.

  - Select the application you registered.

  - Select Review + assign.

You can find the Application (client) ID and Directory (tenant) ID on the Overview page of the registered application.
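To show what the three values collected above are used for, here is a minimal sketch of the standard Microsoft identity platform client-credentials token request. This page does not describe how Druid obtains tokens internally, so treating it as an equivalent request is an assumption; `build_token_request` is a hypothetical helper that only assembles the URL and form body and sends nothing. The default scope is the one this page lists for Azure OpenAI.

```python
from urllib.parse import urlencode

# Default scope for Azure OpenAI, as given later on this page.
DEFAULT_SCOPE = "https://cognitiveservices.azure.com/.default"


def build_token_request(tenant_id, client_id, client_secret, scope=DEFAULT_SCOPE):
    """Return (url, form_body) for an OAuth 2.0 client-credentials request.

    Hypothetical helper for illustration; it builds the request but does
    not send it.
    """
    # Directory (tenant) ID selects the Entra ID tenant's token endpoint.
    url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
    body = urlencode({
        "grant_type": "client_credentials",
        "client_id": client_id,          # Application (client) ID
        "client_secret": client_secret,  # client secret value
        "scope": scope,
    })
    return url, body


# Placeholder values; substitute the ones noted during app registration.
url, body = build_token_request("<tenant-id>", "<client-id>", "<client-secret>")
```

The body would be sent as an HTTP POST with the `application/x-www-form-urlencoded` content type. A successful response carries a short-lived `access_token`, which is then presented as a bearer token to the Azure OpenAI endpoint.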

Add an LLM resource

To establish a governed connection to an LLM provider, follow these steps:

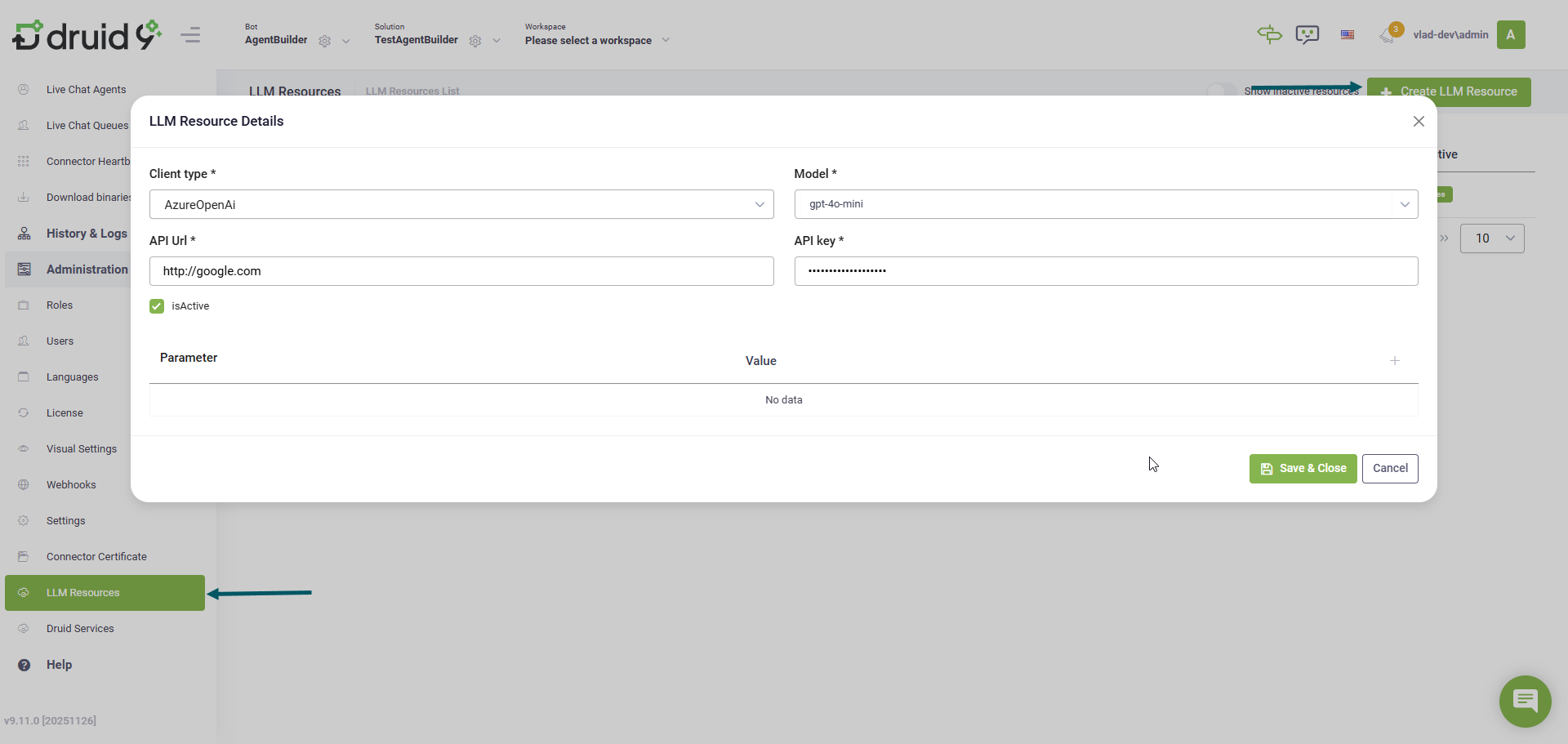

- Go to Administration > LLM Resources.

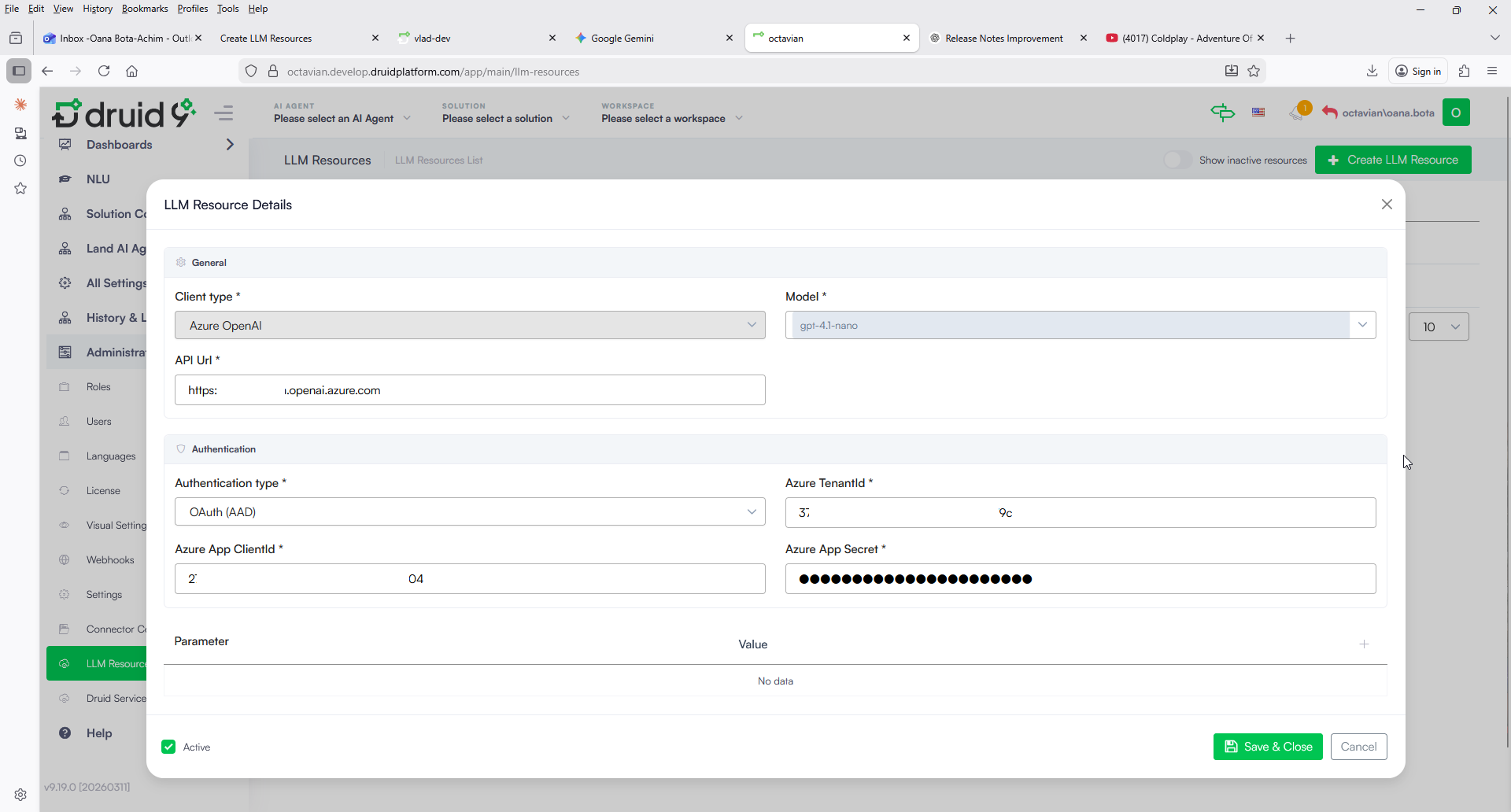

- Click the Create LLM Resource button. The LLM Resource Details modal appears.

- Complete the LLM resource details:

- Client Type: Select the type of LLM client you are using.

- Model: Select or enter the specific model name you intend to use.

- API Url: Enter the LLM service endpoint URL.

- API key: Enter the secret API key provided by your LLM service provider.

- If you selected Azure OpenAI as Client Type, two authentication options are available:

  - API Key

  - OAuth (AAD): Select OAuth (AAD) for enhanced security. Unlike a static API key, which stays valid until it is rotated, OAuth uses short-lived tokens. When you select this option, you must provide the following values that you copied from Azure:

    - Azure TenantId: The unique identifier of your Azure tenant (the Directory (tenant) ID).

    - Azure App ClientId: The Application ID of your registered app (the Application (client) ID).

    - Azure App Secret: The client secret value generated for your app.

  With OAuth (AAD) authentication, you can also define a custom authentication scope. If your configuration requires a scope other than the default (https://cognitiveservices.azure.com/.default), manually add the AuthenticationScope parameter to the settings table and enter your specific value.

- If you selected Google as Client Type, you also need to enter the Vertex AI location and your Google project ID.

- If you selected AwsBedrock as Client Type, you also need to provide the following parameters: region and accessKeyId.

- Click Save & Close.

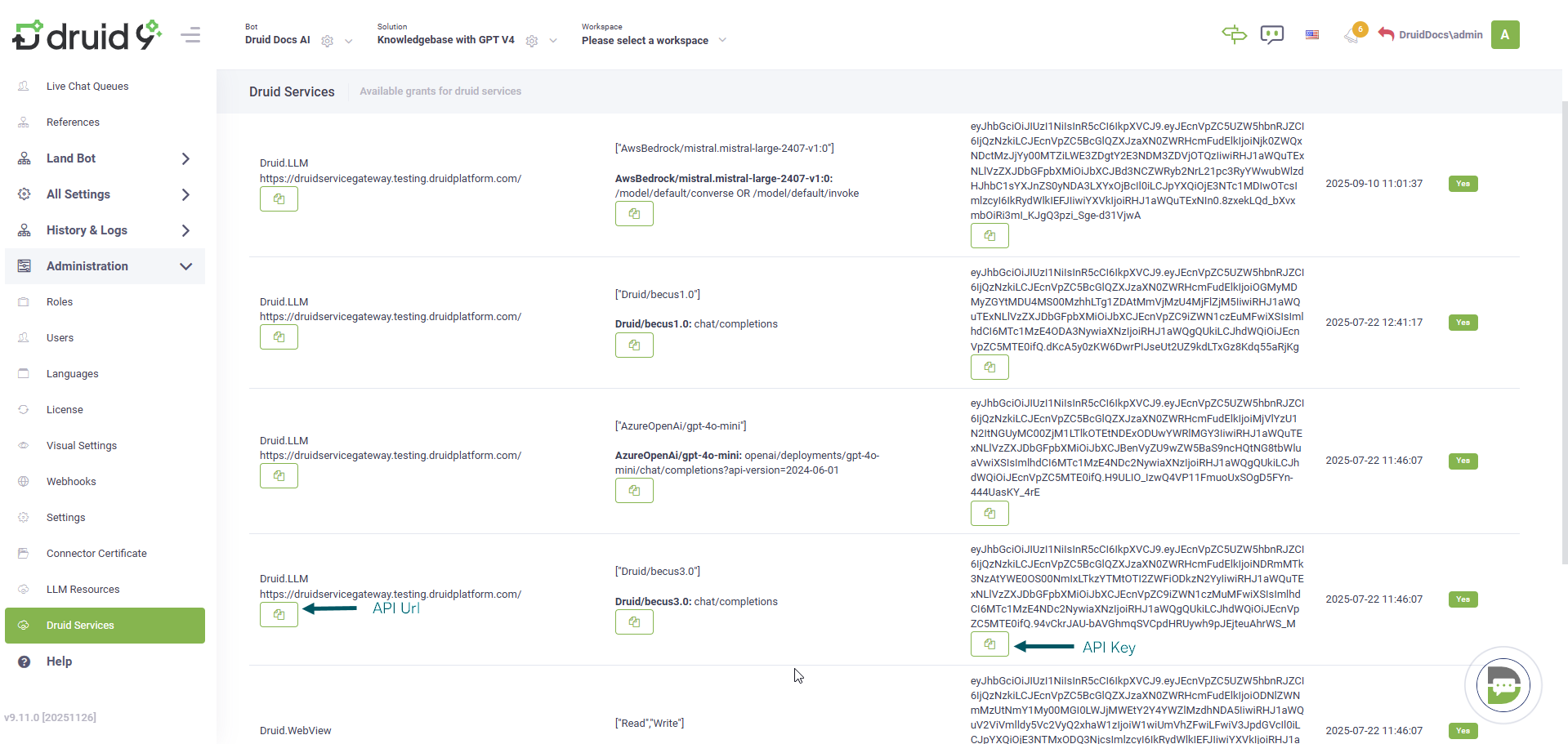
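The provider-specific requirements in the steps above can be summarized as a small validation sketch. The field names, the `auth` key, and both helper functions are hypothetical and chosen only for illustration; they are not Druid's settings schema. The sketch also assumes that an API key is required whenever OAuth is not selected.

```python
# Fields every resource needs, per the "LLM resource details" step.
# All key names here are hypothetical, not Druid's actual schema.
BASE_FIELDS = {"client_type", "model", "api_url"}


def required_fields(client_type: str, auth: str = "api_key") -> set[str]:
    """Return the settings a resource of this client type would need."""
    fields = set(BASE_FIELDS)
    if client_type == "Azure OpenAI" and auth == "oauth":
        # OAuth (AAD) replaces the static key with app registration values.
        fields |= {"azure_tenant_id", "azure_app_client_id", "azure_app_secret"}
    else:
        fields.add("api_key")
    if client_type == "Google":
        fields |= {"location", "project_id"}
    if client_type == "AwsBedrock":
        fields |= {"region", "accessKeyId"}
    return fields


def missing_fields(resource: dict) -> list[str]:
    """List required settings that are absent or empty, sorted by name."""
    needed = required_fields(
        resource.get("client_type", ""), resource.get("auth", "api_key")
    )
    return sorted(name for name in needed if not resource.get(name))


# Placeholder draft for a Google resource without its Vertex AI settings.
draft = {
    "client_type": "Google",
    "model": "<model-name>",
    "api_url": "https://example.invalid",
    "api_key": "<secret>",
}
print(missing_fields(draft))  # ['location', 'project_id']
```

A check like this, run before saving, makes it obvious which extra parameters a given Client Type expects.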

LLM Governance

Once configured, these resources are protected by the platform's governance framework. Access to view, edit, or delete LLM resources is restricted based on the user's assigned RBAC policy. This ensures that sensitive API keys are only accessible to authorized platform administrators.
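As a rough illustration of the access rule described above, the sketch below gates each action on a role's permissions. The role names, the permission sets, and the `LLMResource` shape are all hypothetical; this section does not describe Druid's actual roles or policy model.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission mapping, for illustration only.
ROLE_PERMISSIONS = {
    "platform_admin": {"view", "edit", "delete"},
    "agent_author": set(),  # may use resources in agents, not inspect them
}


@dataclass
class LLMResource:
    name: str
    api_url: str
    api_key: str


def can(role: str, action: str) -> bool:
    """True if the role's policy includes the requested action."""
    return action in ROLE_PERMISSIONS.get(role, set())


def view_resource(role: str, resource: LLMResource) -> dict:
    """Return the resource details, or refuse if the role lacks 'view'."""
    if not can(role, "view"):
        raise PermissionError(f"role {role!r} may not view LLM resources")
    return {
        "name": resource.name,
        "api_url": resource.api_url,
        "api_key": resource.api_key,
    }
```

Unknown roles fall through to an empty permission set, so access is denied by default rather than granted by omission.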

The LLM resource becomes the engine for your AI Response Guardrails. This allows the platform to apply Hallucination Prevention (via Knowledgebase with GPT V4) and Content and Policy Enforcement consistently, regardless of whether the underlying model is hosted in the cloud or on-premises.